High-Performance Thermodynamic Hardware Maintenance Without Unplanned Downtime

For after-sales maintenance teams, High-Performance Thermodynamic Hardware maintenance is no longer just about fixing equipment. It is about protecting uptime, precision, and compliance in mission-critical environments. This guide covers practical strategies for maintaining thermal systems proactively, reducing failure risk, and keeping operations stable without unplanned downtime across advanced industrial facilities.

Why is High-Performance Thermodynamic Hardware maintenance now a strategic issue instead of a routine service task?

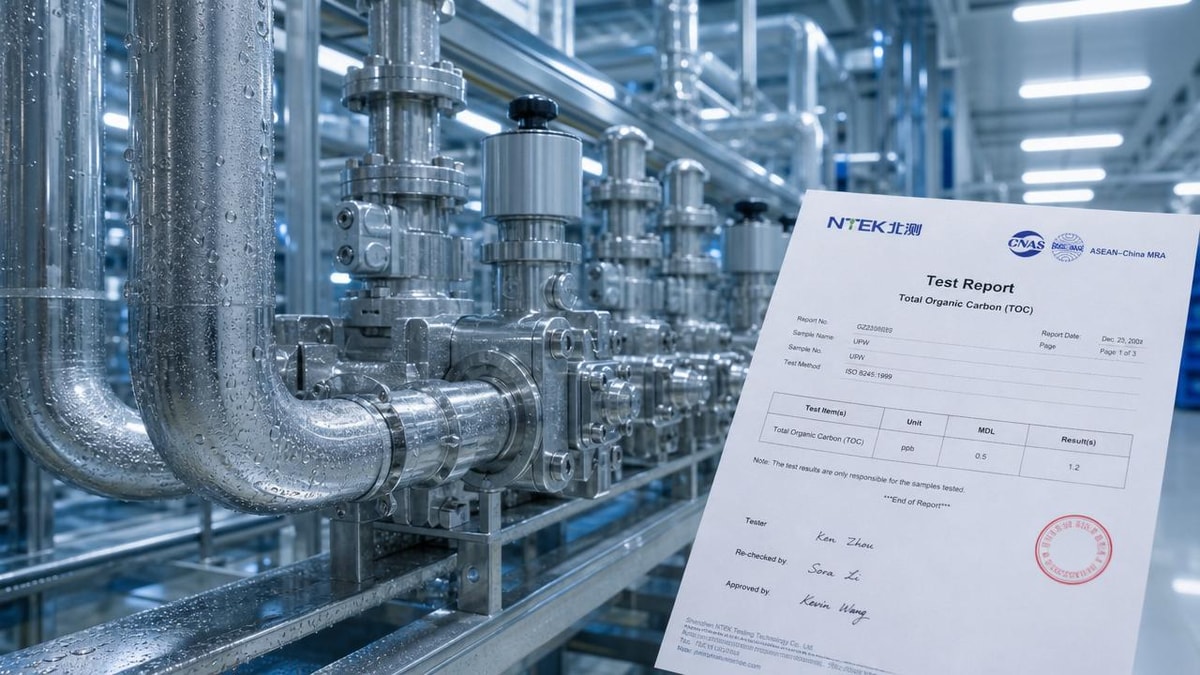

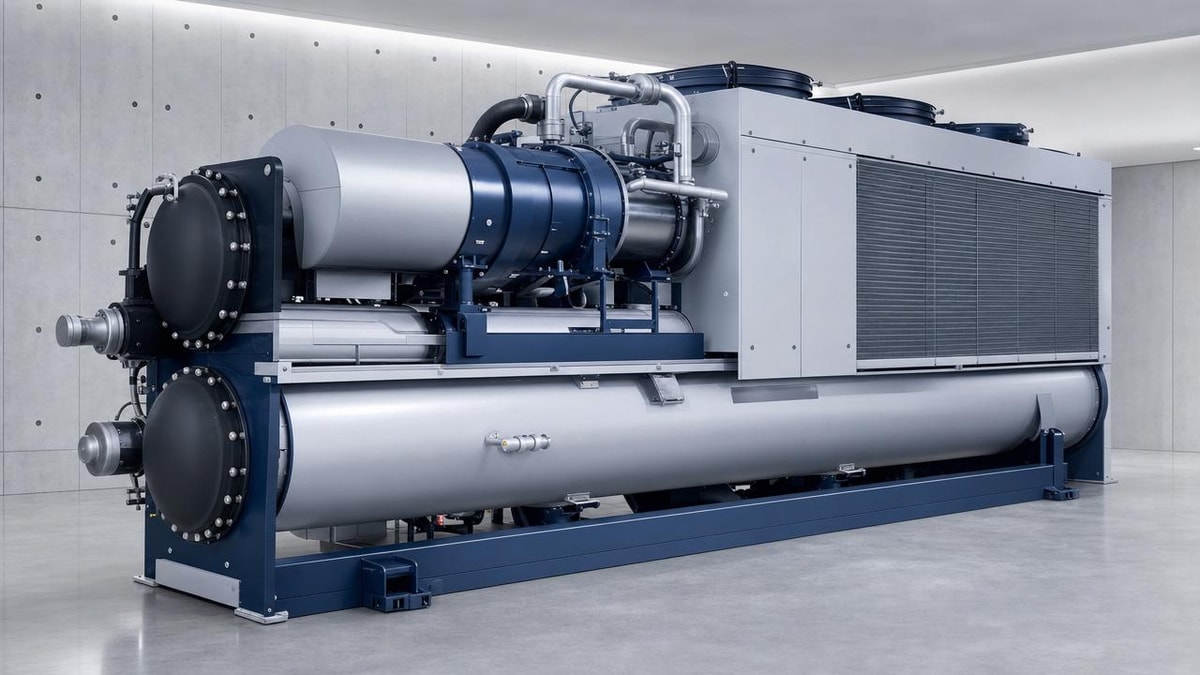

In advanced facilities, thermodynamic hardware sits at the center of operational stability. Chillers, precision HVAC units, heat exchangers, process cooling loops, filtration-integrated air handling systems, pumps, valves, sensors, and control modules do far more than move heat. They protect cleanroom integrity, maintain product quality, stabilize laboratory conditions, and support compliance with standards such as ISO 14644, ASHRAE guidance, and internal quality protocols.

For after-sales teams, this changes the maintenance mindset. A reactive approach may be acceptable for comfort cooling in ordinary buildings, but it is costly in semiconductor fabs, biopharma facilities, containment laboratories, electronics assembly lines, data-intensive environments, and other precision-driven sites. In these settings, a minor drift in temperature, airflow, pressure, or fluid purity can trigger yield loss, batch rejection, contamination risk, or audit findings long before a complete shutdown occurs.

That is why High-Performance Thermodynamic Hardware maintenance must be treated as a reliability program. The goal is not simply to repair failed assets. The goal is to predict failure modes early, preserve design performance, and coordinate service actions in a way that avoids unplanned downtime. This is especially important in multidisciplinary industrial environments where thermal management intersects with contamination control, biosafety, process water quality, and digital building controls.

Which systems and operating environments need the most disciplined maintenance approach?

Not every thermal asset requires the same level of intervention. After-sales maintenance personnel should prioritize systems where performance deviation has a direct effect on process continuity, compliance, or safety. These usually include precision chillers, low-temperature process loops, variable-speed air handling equipment, fan filter units, condenser networks, closed-loop cooling systems, ultra-clean ventilation infrastructure, and redundant environmental control systems tied to alarms or building management platforms.

The most demanding environments share three characteristics. First, they operate within narrow tolerances, sometimes holding temperature or humidity inside very tight bands. Second, they depend on interconnected hardware, so one weak component can cascade through the system. Third, service windows are limited because production schedules, containment requirements, or controlled-environment protocols restrict intervention time.

In practical terms, the strongest candidates for advanced High-Performance Thermodynamic Hardware maintenance are facilities where downtime costs are high, restart procedures are complex, or environmental drift creates hidden quality loss. This includes not only high-tech sectors but also any industrial setting where thermal precision, fluid integrity, and monitored air performance affect output and compliance.

What are the first warning signs that maintenance is becoming too reactive?

Reactive maintenance rarely starts with a dramatic breakdown. It usually reveals itself through recurring minor symptoms. After-sales teams should watch for rising alarm frequency, longer system recovery times, unexplained increases in energy use, repeat service calls for the same subsystem, unstable supply temperatures, disagreement between sensors, filters that load faster than expected, abnormal vibration, valve hunting, or pressure imbalance between designed pressure zones.

Another warning sign is dependence on technician experience without sufficient data history. If a site can only stay stable because a few senior engineers “know the sound” or “know the pattern,” the maintenance system is vulnerable. A disciplined program requires trend logs, failure history, calibration records, and documented root-cause analysis. Without this, maintenance becomes anecdotal and difficult to scale across shifts, sites, or contractors.

Spare-part behavior also tells a story. If emergency replacement parts are frequently rushed in, or if the same consumables fail sooner than expected, the issue may not be part quality alone. It may indicate hidden thermal stress, contamination, improper balancing, poor water chemistry, or control instability. In other words, the symptom may be mechanical, but the root cause is often systemic.

How can after-sales teams reduce unplanned downtime in day-to-day High-Performance Thermodynamic Hardware maintenance?

The most effective way is to move from calendar-only servicing to risk-based maintenance planning. Calendar schedules remain useful for standard inspections, lubrication, cleaning, and filter replacement, but critical assets should also be evaluated by load profile, process importance, historical drift, and failure consequence. A chiller supporting a controlled process room should not be maintained with the same logic as a non-critical utility unit.

A practical workflow often includes four layers. The first is baseline verification: confirm that actual performance still aligns with design intent for temperature, pressure, flow, cleanliness, and power draw. The second is trend monitoring: compare operating data over time to detect degradation before alarms escalate. The third is intervention planning: bundle service tasks into approved windows and isolate impact through redundancy or staged switchover. The fourth is post-maintenance validation: prove that the system returned to stable operation, not just that the task was completed.
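The first and fourth layers (baseline verification and post-maintenance validation) can share one check: compare measured values against the design bands before and after service. The following is a minimal sketch under stated assumptions. The parameter names, the `DesignBand` type, and every numeric limit are hypothetical placeholders; real limits come from the site's design documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DesignBand:
    """Acceptable range for one parameter (hypothetical, set per site)."""
    low: float
    high: float


def out_of_band(readings: dict[str, float], bands: dict[str, DesignBand]) -> list[str]:
    """Return the parameters that are missing or outside their design band."""
    failures = []
    for name, band in bands.items():
        value = readings.get(name)
        if value is None or not (band.low <= value <= band.high):
            failures.append(name)
    return failures


# Illustrative bands only; not taken from any standard or datasheet.
BANDS = {
    "supply_temp_c": DesignBand(6.5, 7.5),
    "diff_pressure_kpa": DesignBand(80.0, 120.0),
    "flow_lps": DesignBand(11.0, 13.0),
}

before = {"supply_temp_c": 7.9, "diff_pressure_kpa": 95.0, "flow_lps": 12.1}
after = {"supply_temp_c": 7.1, "diff_pressure_kpa": 97.0, "flow_lps": 12.0}

print(out_of_band(before, BANDS))  # ['supply_temp_c']
print(out_of_band(after, BANDS))   # []
```

Treating a missing reading as a failure is a deliberate choice in this sketch: a task is not validated if the value that proves recovery was never recorded.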

Communication is equally important. High-Performance Thermodynamic Hardware maintenance fails when service teams, facility operators, production managers, and compliance stakeholders work from different priorities. The maintenance team may want a shutdown for preventive work, while operations may resist because no visible failure exists. This conflict can be reduced by translating maintenance findings into business language: expected uptime risk, potential quality impact, likely recovery time, and compliance exposure.

Quick maintenance judgment table for after-sales teams

Use the following table to decide whether a condition needs immediate action, short-term planning, or routine observation.

What data should be tracked to make High-Performance Thermodynamic Hardware maintenance more predictive?

Predictive maintenance is only useful when the selected data reflects real failure behavior. After-sales teams should focus on parameters that reveal efficiency loss, instability, contamination risk, and control deviation. Typical indicators include entering and leaving fluid temperatures, differential pressure, flow rate, compressor runtime, motor current, vibration trend, humidity stability, filter pressure drop, valve position behavior, heat exchanger approach temperature, and alarm event frequency.

However, raw data alone does not prevent downtime. Teams need thresholds that distinguish acceptable variation from meaningful degradation. For example, a slight increase in power draw may be normal during seasonal change, but a steadily rising approach temperature in a tightly controlled loop often points to fouling or heat transfer decline. Similarly, one isolated sensor anomaly may not justify intervention, but repeated mismatches between process readings and room conditions often indicate calibration drift or control architecture problems.
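One way to separate steady degradation from normal variation is to fit a trend line to logged values and compare its slope with a drift limit. The sketch below applies an ordinary least-squares slope to a series of approach-temperature readings. The readings, the `drift_limit_per_day` value, and the function names are assumptions for illustration, not recommended thresholds.

```python
def slope_per_day(days: list[float], values: list[float]) -> float:
    """Ordinary least-squares slope of values against time in days."""
    n = len(days)
    if n < 2 or n != len(values):
        raise ValueError("need at least two paired samples")
    mean_x = sum(days) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("all samples share the same timestamp")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, values))
    return sxy / sxx


def classify_trend(days: list[float], values: list[float],
                   drift_limit_per_day: float) -> str:
    """Flag a series whose upward drift exceeds the (site-specific) limit."""
    if slope_per_day(days, values) > drift_limit_per_day:
        return "investigate"
    return "normal"


# Hypothetical weekly approach-temperature readings in kelvin.
days = [0, 7, 14, 21, 28]
rising = [1.5, 1.6, 1.8, 1.9, 2.1]
steady = [1.5, 1.52, 1.49, 1.51, 1.5]

print(classify_trend(days, rising, drift_limit_per_day=0.01))  # investigate
print(classify_trend(days, steady, drift_limit_per_day=0.01))  # normal
```

A slope test ignores a single outlier that a fixed alarm threshold would trip on, which matches the distinction between an isolated anomaly and a repeated pattern.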

This is where digital monitoring and historical benchmarking matter. In organizations influenced by G-ICE-style performance governance, maintenance decisions are strengthened by comparing asset behavior against design intent, environmental specifications, and known best-practice ranges. That turns service activity into evidence-based asset stewardship rather than generic troubleshooting.

What mistakes most often increase failure risk during maintenance itself?

One common mistake is replacing components without validating the surrounding cause. A failed sensor may be changed, but if wiring shielding, moisture intrusion, software mapping, or process vibration is ignored, the same problem returns. Another mistake is treating cleaning as a universal fix. Cleaning coils, strainers, or filters is necessary, but over-cleaning, improper chemical use, or contamination introduced during service can damage high-performance systems or compromise sensitive environments.

A third error is neglecting recommissioning checks after intervention. In mission-critical systems, maintenance is incomplete until control logic, alarms, sequencing, balancing, and recovery performance are verified. If a valve actuator is replaced but stroke calibration is skipped, the hardware may appear functional while still undermining precision control. Likewise, if a standby unit is never load-tested, redundancy exists on paper but not in practice.

Documentation failures also create long-term risk. High-Performance Thermodynamic Hardware maintenance should produce traceable records that show what was observed, what was changed, what values were measured before and after service, and what residual risks remain. This is essential for regulated environments, handovers between shifts, and future root-cause investigations.

How should after-sales teams balance maintenance cost, spare parts, and uptime expectations?

The lowest service cost is rarely the lowest operating cost. In high-value facilities, delaying maintenance to save budget can lead to emergency callouts, product loss, compliance disruption, and inefficient energy use. That said, overservicing also wastes resources and can introduce unnecessary intervention risk. The right balance comes from criticality ranking.

Start by separating assets into critical, important, and support categories. For critical equipment, keep validated spare parts, calibrated instruments, and clear escalation procedures available. For important systems, maintain condition-based inspection and planned replacement intervals. For support assets, standard preventive routines may be sufficient. This segmentation helps after-sales teams justify inventory, service frequency, and staffing levels without applying the same expense to every component.
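The three-tier segmentation can be written down as an explicit rule so that it is applied the same way across shifts and sites. This is only a sketch: the two input flags and the rule that tested redundancy moves an asset from critical to important are assumptions, and each site would define its own criteria.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    CRITICAL = "critical"
    IMPORTANT = "important"
    SUPPORT = "support"


@dataclass(frozen=True)
class Asset:
    name: str
    affects_process_or_compliance: bool  # hypothetical criterion
    has_tested_redundancy: bool          # hypothetical criterion


def rank(asset: Asset) -> Tier:
    """Assign a tier using the assumed two-flag rule."""
    if asset.affects_process_or_compliance and not asset.has_tested_redundancy:
        return Tier.CRITICAL
    if asset.affects_process_or_compliance:
        return Tier.IMPORTANT
    return Tier.SUPPORT


# Service approach per tier, paraphrased from the paragraph above.
SERVICE_POLICY = {
    Tier.CRITICAL: "validated spares, calibrated instruments, escalation procedure",
    Tier.IMPORTANT: "condition-based inspection, planned replacement intervals",
    Tier.SUPPORT: "standard preventive routine",
}

process_chiller = Asset("process chiller", True, False)
office_ahu = Asset("office air handler", False, False)

print(rank(process_chiller).value)        # critical
print(SERVICE_POLICY[rank(office_ahu)])   # standard preventive routine
```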

It is also smart to evaluate parts by lead time and operational consequence, not just by price. A relatively inexpensive sensor with a long delivery delay may deserve shelf stock if its failure can stop production. Conversely, some high-cost assemblies may not need local storage if redundancy and response planning are strong. Good High-Performance Thermodynamic Hardware maintenance links parts planning to business continuity, not only procurement habit.
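The stocking logic described here can be sketched as a small decision function in which price plays no part. The field names and the `max_tolerable_outage_days` parameter are hypothetical; the point is only that lead time and operational consequence drive the decision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Part:
    name: str
    lead_time_days: float
    stops_production: bool       # failure of this part halts the process
    covered_by_redundancy: bool  # a standby path absorbs the failure


def should_stock(part: Part, max_tolerable_outage_days: float) -> bool:
    """Stock locally only when delivery would outlast the tolerable outage."""
    if not part.stops_production or part.covered_by_redundancy:
        return False
    return part.lead_time_days > max_tolerable_outage_days


# Illustrative parts; lead times are invented for the example.
sensor = Part("loop temperature sensor", 42, True, False)
compressor = Part("compressor assembly", 60, True, True)

print(should_stock(sensor, max_tolerable_outage_days=1))      # True
print(should_stock(compressor, max_tolerable_outage_days=1))  # False
```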

Before choosing a maintenance partner, upgrade path, or service plan, what questions should be answered first?

After-sales teams and facility leaders should begin with operational clarity. What environmental tolerances are truly critical? Which assets support those tolerances? What failure modes have already occurred, and which ones are still poorly understood? How much downtime is acceptable for each subsystem, and what does recovery actually involve? These questions help define service scope far better than generic maintenance packages.

Next, confirm technical readiness. Are there accurate as-built records, control sequences, sensor maps, trend logs, and spare-part lists? Can the maintenance provider support precision environments, regulated documentation, and post-service validation? Do they understand interactions between thermal performance, contamination control, water quality, and digital monitoring? In modern industrial environments, a provider who only repairs equipment but cannot interpret system interdependencies may not reduce risk effectively.

Finally, define improvement targets. The most useful service conversations focus on measurable outcomes: fewer emergency stoppages, better thermal stability, lower alarm frequency, improved energy efficiency, stronger compliance traceability, or faster mean time to recovery. When a specific solution, parameter set, implementation timeline, budget range, or cooperation model still needs confirmation, the priority topics to settle are asset criticality, existing failure history, operating tolerances, available downtime windows, monitoring capability, spare-part risks, and validation requirements after maintenance. That is the foundation for High-Performance Thermodynamic Hardware maintenance that protects uptime without sacrificing precision or compliance.
