Sub-Micron Contamination Measurement: What Really Affects Accuracy?

In high-spec environments, sub-micron contamination measurement is only as reliable as the conditions, instruments, and protocols behind it. For technical evaluators comparing cleanroom and process-control performance, even minor deviations in airflow, calibration, sampling location, or environmental stability can distort results. Understanding what truly affects accuracy is essential to making defensible engineering and compliance decisions.

For most technical assessment teams, the central question is not whether particle data can be collected, but whether that data is trustworthy enough to support qualification, root-cause analysis, procurement decisions, and audit readiness. The short answer is that accuracy is rarely determined by the particle counter alone. It is shaped by a measurement chain that includes instrument sensitivity, tubing configuration, sample timing, room state, operator practice, and alignment with standards such as ISO 14644, relevant SEMI standards, or internal validation protocols.

If you are evaluating a cleanroom, minienvironment, process tool, or monitoring architecture, the most useful way to think about accuracy is this: every measurement is a system output. When that system is poorly designed or inconsistently controlled, the result may look precise while still being wrong. That distinction matters when contamination thresholds are tight enough to affect yield, sterility assurance, wafer defectivity, or biosafety performance.

What technical evaluators really need to know before trusting a result

The practical question behind this topic is decision-oriented. Evaluators want to know what genuinely changes the accuracy of sub-micron particle data, how to distinguish meaningful readings from misleading ones, and what to verify when comparing facilities, vendors, or environmental-control strategies. A generic definition of contamination measurement does not help with that; a framework for judging data quality does.

For technical evaluators, the biggest concerns are usually consistent across industries. First, can the reported measurements be reproduced under the same conditions? Second, do the readings reflect the real contamination risk at the point of use, not just a convenient sampling point? Third, were the instruments and protocols appropriate for the particle size range that matters to the process? And fourth, will the data withstand scrutiny from quality, EHS, compliance, or customer auditors?

What matters most, then, is not broad theory but the specific factors that bias results upward or downward, and how to evaluate an entire measurement approach: where samples are taken, when and for how long they are taken, what the air is doing during the test, and whether environmental stability was maintained throughout. These are the details that let evaluators judge engineering quality instead of relying on headline numbers.

Why sub-micron accuracy fails even when the instrument is “within spec”

One of the most common misunderstandings in sub-micron contamination measurement is assuming that a compliant particle counter guarantees accurate data. In reality, an instrument can be calibrated correctly and still generate misleading results if the application conditions are poor. Sub-micron particles are strongly influenced by airflow behavior, electrostatic effects, aspiration losses, probe orientation, and short-term environmental instability. At these sizes, measurement error can come from the setup around the instrument as much as from the instrument itself.

Another issue is that “within spec” often refers to manufacturer performance under controlled calibration conditions, not to field deployment in dynamic production or validation environments. A particle counter that performs well in a lab may show very different behavior when connected to long tubing runs, exposed to pulsating process exhaust, or used in a space with turbulence near HEPA or ULPA supply zones. Technical evaluators should therefore separate instrument specification from measurement validity in actual use.

This is especially important when contamination levels are very low. At low particle concentrations, statistical confidence becomes a limiting factor. A single short sample may not provide enough counts to support strong conclusions. In these cases, apparent differences between rooms, tools, or operating states may reflect sampling noise rather than true environmental divergence. Accuracy is not only about sensor capability; it is also about whether the sampling plan was robust enough to capture representative conditions.
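To make the counting-statistics point concrete, the sketch below treats particle counts as Poisson-distributed (a common idealization at low concentrations, assumed here rather than taken from any standard) and computes an exact two-sided confidence interval for the expected count. The helper names are hypothetical and not part of any counter's software.

```python
import math


def poisson_cdf(k: int, lam: float) -> float:
    """P(X <= k) for X ~ Poisson(lam), with terms computed in log space to avoid underflow."""
    if lam == 0.0:
        return 1.0
    log_lam = math.log(lam)
    return sum(math.exp(-lam + i * log_lam - math.lgamma(i + 1)) for i in range(k + 1))


def bisect_root(f, lo: float, hi: float, iters: int = 200) -> float:
    """Locate the sign change of a monotone function f on [lo, hi] by bisection."""
    f_lo_positive = f(lo) > 0
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if (f(mid) > 0) == f_lo_positive:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def poisson_ci(k: int, confidence: float = 0.95) -> tuple[float, float]:
    """Exact (Garwood) two-sided confidence interval for the expected count, given k observed counts."""
    alpha = 1.0 - confidence
    search_max = k + 10.0 * math.sqrt(k + 1) + 10.0
    # Upper limit: expected count at which observing k or fewer has probability alpha/2.
    upper = bisect_root(lambda lam: poisson_cdf(k, lam) - alpha / 2, 0.0, search_max)
    if k == 0:
        return 0.0, upper
    # Lower limit: expected count at which observing k or more has probability alpha/2.
    lower = bisect_root(lambda lam: 1.0 - poisson_cdf(k - 1, lam) - alpha / 2, 0.0, search_max)
    return lower, upper


if __name__ == "__main__":
    volume_m3 = 0.0283  # one cubic foot sampled in each room (illustrative)
    for room, counts in (("Room A", 3), ("Room B", 7)):
        lo, hi = poisson_ci(counts)
        print(f"{room}: {counts} counts -> {lo / volume_m3:.0f} to {hi / volume_m3:.0f} particles/m3 (95% CI)")
```

With 3 counts in one room and 7 in another, each from one cubic foot of air, the 95 % intervals overlap, so the apparent difference could be sampling noise. Overlapping intervals are a conservative screen rather than a formal hypothesis test.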

Instrument sensitivity, calibration, and counting efficiency are only the starting point

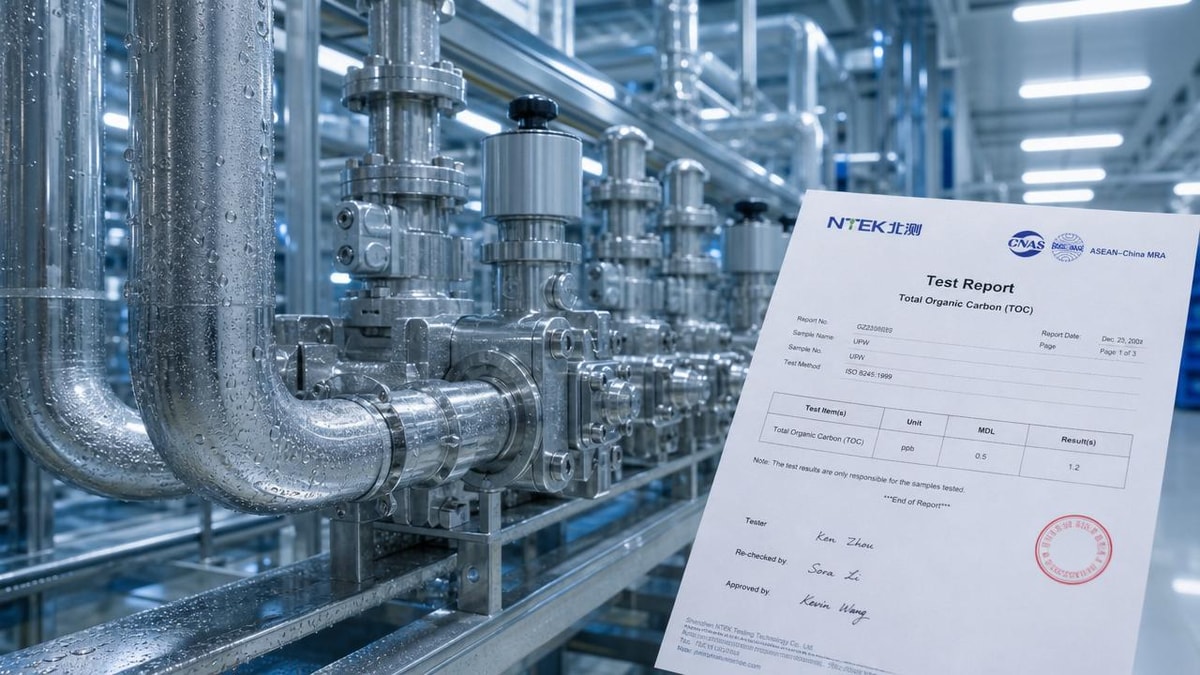

The first technical checkpoint is whether the selected instrument is appropriate for the particle size threshold of interest. Not all counters have the same lower detection limit, optical design, flow stability, or counting efficiency near the cutoff size. If a facility claims strong sub-micron control but the instrument’s effective performance degrades close to that threshold, the reported data may understate risk. Evaluators should review calibration certificates, channel definitions, coincidence loss limits, and the intended use case of the counter.
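A quick fitness screen can make these checks explicit. The sketch assumes a counter calibrated to ISO 21501-4, which, as we understand it, accepts a counting efficiency of about 50 % (±20 %) at the minimum detectable size and about 100 % (±10 %) at 1.5 to 2 times that size; the 1.5× margin below borrows that ratio, and the 80 % coincidence screen is an arbitrary illustrative choice. `CounterSpec` and `fitness_warnings` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class CounterSpec:
    """Counter parameters copied from the datasheet or calibration certificate (user-supplied)."""
    min_detectable_um: float         # smallest size the counter is calibrated to detect
    coincidence_limit_per_m3: float  # concentration at which the stated coincidence loss is reached


def fitness_warnings(spec: CounterSpec, threshold_um: float, measured_per_m3: float,
                     size_margin: float = 1.5, coincidence_fraction: float = 0.8) -> list[str]:
    """Return reasons to doubt the reported data; an empty list means no flag was raised.

    size_margin and coincidence_fraction are illustrative screening choices, not normative values.
    """
    warnings = []
    if threshold_um < spec.min_detectable_um:
        warnings.append("threshold is below the counter's minimum detectable size")
    elif threshold_um < size_margin * spec.min_detectable_um:
        warnings.append("threshold is close to the detection limit; counting efficiency may be well below 100%")
    if measured_per_m3 > coincidence_fraction * spec.coincidence_limit_per_m3:
        warnings.append("concentration approaches the coincidence limit; counts may be understated")
    return warnings
```

For a counter with a 0.1 µm minimum detectable size, reporting against a 0.1 µm threshold raises the efficiency warning, while a 0.2 µm threshold does not.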

Calibration quality also deserves closer attention than it often gets. A valid calibration date is necessary but not sufficient. What matters is traceability, calibration method, challenge aerosol characteristics, and whether the instrument has been verified after transport, maintenance, or filter replacement. In critical environments, a particle counter that has drifted slightly can materially change pass/fail outcomes, especially near alert or action limits. Post-service verification and routine zero checks should be part of the review.

Flow accuracy is another major factor. If the instrument flow rate is unstable, the sampled air volume becomes uncertain, and concentration calculations lose reliability. This is particularly problematic when comparing results from different sites or vendors. A technically sound evaluation should confirm not just calibration status, but also operational health: flow control, internal pump performance, optics cleanliness, and any alarms or deviations recorded in the instrument history.
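The effect of an unnoticed flow error can be worked out directly. Assuming the counter converts counts to concentration using its nominal flow setpoint (an assumption; check how your instrument does it), the reported concentration is off by the ratio of actual to nominal flow, independent of sample time. The function names are illustrative.

```python
def concentration_per_m3(counts: int, flow_lpm: float, minutes: float) -> float:
    """Particles per cubic metre from a raw count, assuming the stated flow held for the whole sample."""
    sampled_m3 = flow_lpm * minutes / 1000.0  # litres -> cubic metres
    return counts / sampled_m3


def flow_bias(nominal_lpm: float, actual_lpm: float) -> float:
    """Relative error in reported concentration when volume is computed from the nominal flow."""
    # Reported = N / (Q_nominal * t); true = N / (Q_actual * t); the ratio depends only on the flows.
    return actual_lpm / nominal_lpm - 1.0
```

For example, a pump delivering 25.5 L/min against a 28.3 L/min setpoint would understate concentration by roughly 10 %, which can be enough to move a result across an alert limit.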

Sampling location is often the biggest hidden source of error

Where the air is sampled can matter more than the nominal cleanliness class of the room. Sub-micron particles are not distributed uniformly, especially in spaces with process equipment, operator movement, return-air patterns, or local heat loads. A sample taken directly under a stable laminar supply may produce an excellent result while missing contamination generated at the tool interface, transfer path, or critical work zone. For technical evaluators, location relevance is a stronger indicator of measurement quality than location convenience.

The key question is whether the sample point represents the actual exposure risk to product, process, or critical surface. In semiconductor and advanced manufacturing environments, that may mean measuring near FOUP interfaces, wafer handling zones, or mini-environment access points. In pharmaceutical and life-science settings, it may mean sampling in areas that reflect aseptic intervention risk rather than background supply air. If the sampling point is chosen for easy compliance rather than process relevance, the result may be formally acceptable but operationally weak.

Probe orientation also affects aspiration efficiency. If the inlet is not aligned with the airflow direction, or if the inlet velocity differs markedly from the local air velocity, some particle sizes may be overcounted or undercounted. This aspiration bias grows with particle inertia, so it weighs most heavily on the larger channels that multi-channel reports usually present alongside sub-micron data. In unidirectional flow environments, isokinetic considerations are especially important. Even when exact isokinetic sampling is not fully achievable, evaluators should verify that inlet design and placement minimize bias. Poor probe orientation is a subtle but frequent reason why data from two otherwise similar tests do not agree.
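For a round probe, the isokinetic condition fixes the inlet diameter: the mean inlet velocity Q/A should match the local air velocity. The sketch assumes a circular inlet; the 28.3 L/min flow and 0.45 m/s air velocity used in the example are illustrative values, not recommendations.

```python
import math


def isokinetic_inlet_diameter_mm(flow_lpm: float, air_velocity_m_s: float) -> float:
    """Inlet diameter whose mean inlet velocity equals the local air velocity."""
    flow_m3_s = flow_lpm / 1000.0 / 60.0
    area_m2 = flow_m3_s / air_velocity_m_s                 # A = Q / v
    return math.sqrt(4.0 * area_m2 / math.pi) * 1000.0      # d = sqrt(4A / pi), in mm


def velocity_ratio(flow_lpm: float, inlet_diameter_mm: float, air_velocity_m_s: float) -> float:
    """Ratio of free-stream velocity to mean inlet velocity; 1.0 is isokinetic."""
    area_m2 = math.pi * (inlet_diameter_mm / 1000.0) ** 2 / 4.0
    inlet_velocity_m_s = flow_lpm / 1000.0 / 60.0 / area_m2
    return air_velocity_m_s / inlet_velocity_m_s
```

At 28.3 L/min and 0.45 m/s this gives an inlet of roughly 36.5 mm. A velocity ratio above 1 (inlet slower than the surrounding air) tends to overcount larger particles, and a ratio below 1 tends to undercount them; sub-micron particles follow the streamlines closely, so the effect at those sizes is much smaller.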

Airflow behavior can distort counts far more than many reports admit

Airflow is one of the dominant drivers of sub-micron measurement accuracy because it governs particle transport, dilution, deposition, and resuspension. Stable unidirectional flow supports more predictable particle behavior, while turbulence, eddies, and localized recirculation create inconsistent readings. This means that contamination data cannot be interpreted in isolation from airflow performance. A room with excellent filters but unstable flow patterns may produce less trustworthy particle data than a slightly less efficient system with better aerodynamic control.

Technical evaluators should look at diffuser layout, FFU performance consistency, return-air positioning, pressure differentials, and the impact of obstacles such as process tools, partitions, carts, and operators. A common failure mode occurs when airflow is validated at commissioning but altered later by equipment additions or operational changes. The contamination measurement protocol remains the same, but the air behavior around the sample point has shifted. Without correlating counts to airflow conditions, teams may misread the cause of variation.

Short-term disturbances are equally important. Door openings, maintenance activity, startup transients, and occupancy changes can all produce count spikes that do not represent steady-state performance. That does not mean those spikes are irrelevant. It means they should be classified correctly. For engineering comparison, readers need to know whether the reported value reflects at-rest, operational, recovery, or transient conditions. Accuracy depends on matching the test condition to the intended decision.

Environmental stability matters: temperature, humidity, pressure, and vibration all play a role

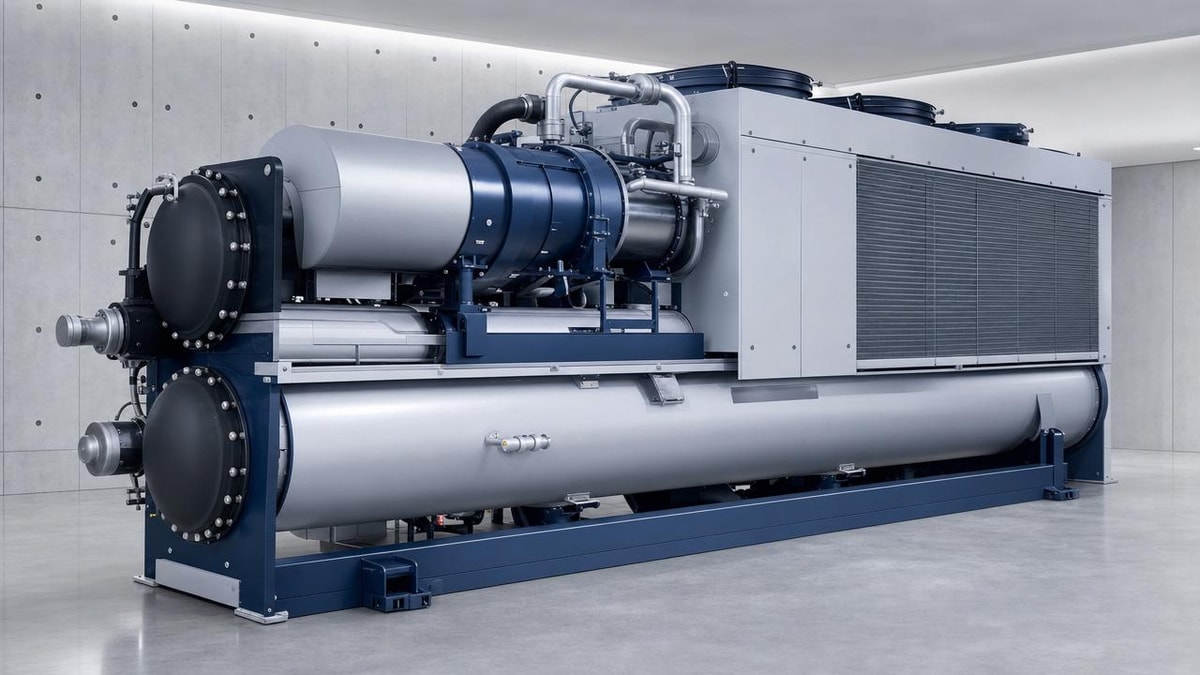

In high-performance environments, sub-micron contamination does not behave independently of other environmental variables. Temperature drift can alter air density gradients and the rise of process heat plumes. Relative humidity can influence electrostatic charge behavior and particle adhesion. Pressure instability can disrupt room balance and migration pathways. Vibration can affect both sensitive processes and the measurement platform itself, especially in tool-level or mini-environment testing.

This is one reason advanced facilities increasingly integrate particle monitoring with broader environmental telemetry. A particle excursion means more when it can be correlated with a pressure dip, fan-speed shift, chilled-water event, or door interlock cycle. For evaluators, this cross-correlation is powerful because it turns isolated contamination data into diagnosable operational evidence. It becomes easier to tell whether the count change reflects a real cleanliness issue, a control-system fluctuation, or a measurement artifact.
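A minimal correlation check might pair each particle excursion with differential-pressure readings taken around the same time. The record layout, alert limit, pressure threshold, and two-minute window are all hypothetical; a match shows coincidence in time, not cause.

```python
from datetime import datetime, timedelta

Reading = tuple[datetime, float]  # (timestamp, value)


def classify_excursions(
    particle_log: list[Reading],
    pressure_log: list[Reading],
    alert_limit: float,
    min_dp_pa: float,
    window: timedelta = timedelta(minutes=2),
) -> list[tuple[datetime, float, bool]]:
    """For each particle reading above alert_limit, report whether any differential-pressure
    reading within +/- window fell below min_dp_pa."""
    flagged = []
    for t, count in particle_log:
        if count <= alert_limit:
            continue
        dip_nearby = any(abs(tp - t) <= window and dp < min_dp_pa for tp, dp in pressure_log)
        flagged.append((t, count, dip_nearby))
    return flagged
```

Excursions with no nearby pressure event are the ones that most need a separate explanation, whether a real cleanliness issue or a measurement artifact.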

Humidity control deserves special emphasis. In some environments, excessively low humidity increases electrostatic attraction and particle retention on surfaces, followed by episodic release. In others, higher humidity may affect process sensitivity or encourage different contamination behavior. Accuracy improves when measurements are interpreted together with the environmental envelope during the test, rather than as standalone particle numbers detached from context.

Sample duration, air volume, and timing determine whether the data is representative

Sub-micron counts are highly sensitive to statistical treatment. If sample time is too short, the number of detected particles may be too small to support reliable comparison, especially in very clean areas. Evaluators should pay attention to total sampled volume, the number of replicate samples, and whether the test design aligns with the confidence needed for the decision. A single brief sample may be enough for troubleshooting a gross issue, but not for qualifying a critical environment.
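ISO 14644-1 gives a concrete rule for sample volume. Its class-limit equation is C_n = 10^N × (0.1/D)^2.08 (the tabulated limits are rounded), and the minimum single-sample volume per location is V_s = (20 / C_n,m) × 1000 litres, with at least 2 litres and at least 1 minute of sampling, where C_n,m is the class limit for the largest considered particle size. The factor of 20 means a sample taken exactly at the class limit would be expected to contain about 20 particles. The sketch implements those rules as we understand them; check them against the edition of the standard you work to.

```python
def class_limit_per_m3(iso_class: float, particle_size_um: float) -> float:
    """ISO 14644-1 class-limit equation C_n = 10^N * (0.1 / D)^2.08 (unrounded)."""
    return 10.0 ** iso_class * (0.1 / particle_size_um) ** 2.08


def min_sample(iso_class: float, largest_size_um: float, flow_lpm: float) -> tuple[float, float]:
    """Minimum single-sample volume (litres) and duration (minutes) per location.

    V_s = 20 / C_n,m * 1000, with at least 2 litres and at least 1 minute of sampling.
    """
    volume_l = max(20.0 / class_limit_per_m3(iso_class, largest_size_um) * 1000.0, 2.0)
    minutes = max(volume_l / flow_lpm, 1.0)
    return volume_l, minutes
```

For ISO Class 3 at 0.1 µm and a 2.83 L/min counter, this works out to 20 litres, or a little over 7 minutes per location; at ISO Class 5 and 0.1 µm, the 2-litre and 1-minute floors govern instead.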

Timing is equally important. Measurements taken immediately after cleaning, before occupancy, or during a production lull will naturally differ from measurements collected during active operation. Neither is inherently wrong, but each answers a different question. Technical evaluators should insist that reports clearly define the operational state of the space and explain why that state is relevant. Otherwise, two facilities may appear to differ, or appear equivalent, simply because they were tested under different conditions.

Trend data often provides more insight than isolated snapshots. When contamination counts are reviewed over time, recurring patterns become visible: startup spikes, end-of-shift drift, maintenance-related excursions, or seasonal environmental effects. This is particularly useful when evaluating the long-term capability of a cleanroom or HVAC design. A single low count is not strong proof of control. Stable performance across repeated conditions is far more persuasive.
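A simple way to surface such patterns is to average readings by hour of day across many days and flag hours that stand well above the typical level. The 3× screening factor and the function names are illustrative assumptions.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean, median


def hourly_profile(samples: list[tuple[datetime, float]]) -> dict[int, float]:
    """Mean concentration for each hour of the day, pooled across all days in the record."""
    by_hour: dict[int, list[float]] = defaultdict(list)
    for t, value in samples:
        by_hour[t.hour].append(value)
    return {hour: mean(values) for hour, values in sorted(by_hour.items())}


def recurring_peaks(profile: dict[int, float], factor: float = 3.0) -> list[int]:
    """Hours whose mean exceeds `factor` times the median hourly mean (an arbitrary screen)."""
    baseline = median(list(profile.values()))
    return [hour for hour, value in profile.items() if value > factor * baseline]
```

A peak that recurs at the same hour every day, such as a shift-start spike, points to an operational cause rather than random noise.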

Tubing, fittings, and transport losses can quietly bias sub-micron readings

Whenever remote sampling is used, tubing design becomes a critical part of measurement accuracy. At sub-micron sizes, particle transport losses can occur due to diffusion, electrostatic effects, bends, rough internal surfaces, and excessive line length. These losses may cause undercounting before the aerosol even reaches the instrument. In some systems, the sampling architecture itself becomes the dominant error source.

Evaluators should examine tubing diameter, material, routing complexity, bend radius, connection integrity, and the total transit distance from sampling point to counter. Conductive or anti-static materials may be needed depending on the application. Sharp bends and long horizontal runs should be questioned. If a supplier presents impressive data from a remote manifold but does not quantify transport efficiency, the measurement confidence may be lower than the report suggests.
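Transport efficiency can at least be estimated for the diffusion mechanism. The sketch applies the Gormley-Kennedy solution for laminar flow in a straight round tube, with a Stokes-Einstein diffusion coefficient and an empirical Cunningham slip correction; air properties are assumed values for about 20 °C and 1 atm. Bends, electrostatic losses, and settling are not modelled, so treat the result as an upper bound on penetration rather than a validated transport efficiency.

```python
import math

# Air properties near 20 C and 1 atm (assumed conditions).
BOLTZMANN_J_PER_K = 1.380649e-23
AIR_VISCOSITY_PA_S = 1.81e-5
AIR_KINEMATIC_VISCOSITY_M2_S = 1.5e-5
MEAN_FREE_PATH_M = 0.066e-6


def slip_correction(d_m: float) -> float:
    """Empirical Cunningham slip correction factor (constants assumed)."""
    ratio = MEAN_FREE_PATH_M / d_m
    return 1.0 + ratio * (2.34 + 1.05 * math.exp(-0.39 * d_m / MEAN_FREE_PATH_M))


def diffusion_coefficient(d_m: float, temp_k: float = 293.15) -> float:
    """Stokes-Einstein particle diffusion coefficient with slip correction, in m^2/s."""
    return BOLTZMANN_J_PER_K * temp_k * slip_correction(d_m) / (3.0 * math.pi * AIR_VISCOSITY_PA_S * d_m)


def tube_reynolds(flow_lpm: float, tube_id_mm: float) -> float:
    """Reynolds number for flow in a round tube: Re = 4Q / (pi * d * nu)."""
    q_m3_s = flow_lpm / 60000.0
    return 4.0 * q_m3_s / (math.pi * (tube_id_mm / 1000.0) * AIR_KINEMATIC_VISCOSITY_M2_S)


def diffusion_penetration(d_um: float, length_m: float, flow_lpm: float, tube_id_mm: float) -> float:
    """Fraction of particles not lost to the tube wall by diffusion (Gormley-Kennedy, laminar only)."""
    if tube_reynolds(flow_lpm, tube_id_mm) > 2000.0:
        raise ValueError("flow is not laminar; the Gormley-Kennedy solution does not apply")
    q_m3_s = flow_lpm / 60000.0
    mu = diffusion_coefficient(d_um * 1e-6) * length_m / q_m3_s  # dimensionless deposition parameter
    if mu < 0.009:
        return 1.0 - 5.50 * mu ** (2.0 / 3.0) + 3.77 * mu
    return 0.819 * math.exp(-11.5 * mu) + 0.0975 * math.exp(-70.1 * mu)
```

In the laminar regime the parameter μ = DL/Q does not depend on tube diameter, so diameter enters only through the Reynolds-number check. At 28.3 L/min, common sample tubing can fall in the transitional or turbulent range, where a different loss correlation is needed.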

Response time also matters in manifolded or switched monitoring systems. Delays in purge and stabilization can cause carryover between points or missed transient events. A technically credible monitoring design should specify how long each line is purged, how sample integrity is maintained, and what validation was performed to confirm that the remote architecture does not materially distort the result.
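Purge time and revisit interval follow from line volume and flow. The five-line-volume purge used as a default is a hypothetical site rule, not a normative figure, and the function names are illustrative.

```python
import math


def line_volume_l(length_m: float, tube_id_mm: float) -> float:
    """Internal volume of a sample line in litres."""
    radius_m = tube_id_mm / 2000.0
    return math.pi * radius_m ** 2 * length_m * 1000.0


def purge_seconds(length_m: float, tube_id_mm: float, flow_lpm: float, line_volumes: float = 5.0) -> float:
    """Time to flush a line with a chosen number of line volumes (the multiple is a site assumption)."""
    return line_volumes * line_volume_l(length_m, tube_id_mm) / flow_lpm * 60.0


def revisit_interval_min(n_points: int, purge_s: float, sample_s: float) -> float:
    """Time a sequential manifold takes to return to the same sampling point."""
    return n_points * (purge_s + sample_s) / 60.0
```

With 12 points, a 15 s purge, and a 60 s sample, each point is revisited only every 15 minutes, so a transient shorter than that can be missed entirely.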

Operator discipline and protocol consistency often separate credible data from weak data

Even with strong instruments and stable infrastructure, poor procedural discipline can reduce data value. Common problems include inconsistent probe placement, undocumented changes in test sequence, improper warm-up, failure to verify zero counts, and inadequate recording of room state. In highly controlled environments, small procedural deviations can create enough uncertainty to weaken engineering conclusions or trigger unnecessary investigations.

For this reason, technical evaluators should review standard operating procedures and not just final reports. A sound protocol defines pre-test conditions, equipment checks, sampling positions, durations, operational state, acceptance criteria, and handling of outliers or interruptions. It also documents whether the environment was at rest, in operation, or undergoing recovery. Without this structure, repeatability suffers and comparison across tests becomes difficult.
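One way to enforce that structure is to make each sample record declare the elements a protocol needs and report what is missing. The fields are a hypothetical schema chosen to mirror the protocol elements listed above, with room states taken from this article; they do not come from any particular standard or software.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class RoomState(Enum):
    AT_REST = "at-rest"
    OPERATIONAL = "operational"
    RECOVERY = "recovery"
    TRANSIENT = "transient"


@dataclass
class SampleRecord:
    """One sample as a protocol might require it to be recorded (field set is illustrative)."""
    location_id: str
    room_state: RoomState
    duration_min: float
    volume_l: float
    warm_up_done: bool
    zero_check_passed: bool
    test_date: date
    calibration_due: date
    interruption_note: str = ""


def record_problems(rec: SampleRecord) -> list[str]:
    """List the reasons a record would not support repeatable comparison."""
    problems = []
    if not rec.location_id.strip():
        problems.append("sampling position not recorded")
    if rec.duration_min <= 0 or rec.volume_l <= 0:
        problems.append("duration or sampled volume missing")
    if not rec.warm_up_done:
        problems.append("instrument warm-up not confirmed")
    if not rec.zero_check_passed:
        problems.append("zero-count check not passed")
    if rec.test_date > rec.calibration_due:
        problems.append("calibration had expired on the test date")
    return problems
```

Making the room state a required field means no result can be filed without saying whether it was taken at rest, in operation, during recovery, or during a transient.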

Training is part of accuracy. Personnel should understand not only how to use the instrument, but why specific steps matter. When teams know how airflow direction, occupancy, and tubing layout affect results, they are more likely to avoid hidden sources of bias. In this sense, measurement accuracy is also a competence issue, not just a hardware issue.

How to evaluate whether contamination data is decision-grade

For technical assessment purposes, a useful approach is to ask five questions. Was the instrument appropriate and verified? Was the sample location relevant to actual contamination risk? Were airflow and environmental conditions stable and documented? Was the sample duration sufficient for the cleanliness level being assessed? And were the procedures repeatable and traceable? If any of these answers are weak, the data should be treated with caution.
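The five questions can be captured as a simple screening checklist in which any unanswered question counts as weak. The wording and function name are illustrative.

```python
FIVE_QUESTIONS = (
    "instrument appropriate and verified",
    "sample location relevant to actual contamination risk",
    "airflow and environmental conditions stable and documented",
    "sample duration sufficient for the cleanliness level",
    "procedures repeatable and traceable",
)


def assess_data(answers: dict[str, bool]) -> tuple[str, list[str]]:
    """Return a verdict plus the questions answered 'no' or left unanswered."""
    weak = [q for q in FIVE_QUESTIONS if not answers.get(q, False)]
    verdict = "decision-grade" if not weak else "treat with caution"
    return verdict, weak
```

Treating a missing answer as a weak one keeps gaps in the documentation from passing silently.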

It is also worth distinguishing between compliance-grade data and engineering-grade data. Compliance-grade data may be enough to demonstrate that a room passed a specified classification under a defined condition. Engineering-grade data goes further. It helps explain why performance looks the way it does, whether the design is robust under operational stress, and where future risk may emerge. For vendor comparisons, retrofit decisions, or high-value infrastructure investments, engineering-grade data is usually the more useful standard.

In advanced sectors served by organizations such as G-ICE, this distinction matters because invisible performance limits often define strategic outcomes. Cleanliness data must support not only present qualification, but also thermal stability, process consistency, ESG accountability, and long-term operational resilience. That requires measurement systems designed for insight, not just checkbox reporting.

Conclusion: accurate sub-micron measurement depends on the entire measurement ecosystem

The most important takeaway is simple: sub-micron contamination measurement accuracy is determined by the full measurement ecosystem, not by the particle counter alone. Instrument sensitivity, calibration, and flow control matter, but so do sample location, airflow behavior, environmental stability, tubing losses, timing, and operator discipline. In high-spec environments, these factors interact, and overlooking any one of them can produce data that appears credible while masking real contamination risk.

For technical evaluators, the best path is to treat contamination results as evidence that must be qualified by context. Ask how the measurement was made, whether it reflects the actual exposure zone, and whether the environmental and procedural conditions support repeatable interpretation. When those questions are answered clearly, the data becomes far more valuable for procurement, compliance, validation, and root-cause analysis.

Ultimately, defensible decisions come from defensible measurements. The cleaner the environment and the smaller the particle threshold, the less room there is for hidden error. Teams that understand what truly affects accuracy are better equipped to compare systems fairly, identify weak controls early, and build contamination strategies that hold up under both operational and regulatory scrutiny.
